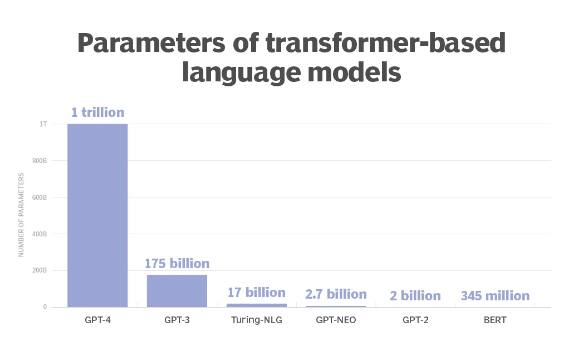

Which Makes Socialism the Only Coherent Economic Future in an AI World

by Nathan Newman, May 8, 2023

We have a new technology in AI that is designed to strip-mine the common cultural and scientific capital of our society, and reordering production to ensure that people continue to contribute to the commons, and get compensated for renewing it, will be a massive political task.

Much of the focus on the dangers of AI is on which jobs will be destroyed, while others argue that technology often creates new jobs out of increased productivity. That's the history of technology, and it's reasonable to argue AI may be no different. But AI is different in one crucial respect: it is not just a technology for increasing the productivity of a particular worker, as the industrial loom and the assembly line were, but one that makes dominant companies more profitable by, in practice, borrowing the work of other people on an ongoing basis.

This means markets will inherently fail to price the value of everything being produced with the help of AI. The company using AI may make money, and it may pay its employees (how well is a big issue), but those producing the borrowed knowledge behind that production won't get paid. So the question is less whether there will be jobs in the future than who will control them, and whether the people ultimately producing the knowledge we depend on most will be the ones making any money in that future.

Call it socialism or some other word, but as a society we will need to deploy substantial non-market policy solutions to address the distortions in our economy that will result from the expanding use of AI.

The Power of AI is Based on the Amount of Knowledge Borrowed from the Rest of Humanity

The newest AI systems, like ChatGPT, are based on training what are called Large Language Models (LLMs), which involves feeding massive amounts of data into the computers.
In the case of the newest GPT-4 system, this involves feeding 100 billion words into the system, which get translated into even greater numbers of variables, called parameters, that respond to user requests to deliver whatever users ask the AI to produce. Other AI systems ingest similar volumes of images or other kinds of data for various specialized purposes. In a matter of a few years, the number of parameters in these systems has gone from a few billion to over a trillion.

The leaps in power in recent AI releases are all about the orders of magnitude of additional information used in those systems. Many people are hearing about AI for the first time because the amount of information fed into these systems has just crossed a threshold that allows the AI to combine it in ways that effectively mimic what a human being would produce. The core technology is impressive, but it's the degree of scavenging of other people's work that creates the awe-inspiring results people are talking about.

Whether delivering an essay on a particular topic, writing a short story, creating a new image with customized features, or writing computer code for specific purposes, ChatGPT and other AI systems will clearly be able to take over a wide range of tasks currently done by human beings. Even if the final product has limits, it can save time by producing a good first draft that may just need refining and fact-checking by a human expert.

For average users not just looking to cheat on their homework, one of the most popular AI tasks has been creating eye-popping visual images: taking a few words of text describing the goal and producing a digital picture, or even an animation, from those words. But the inspiration for these images has to come from somewhere, and many artists are up in arms about their art being used as part of the training data.
Even though the generated images are not exact duplicates of existing art, artists' advocates identify patterns of inspiration that seem to influence the results. See this Vox video for more on how the process works and some of the debate among artists.

Lack of AI Sentience is a Disappointment for Many, but Copying Has Always Underlain Intellectual Progress

Still, merely noting the borrowing of ideas in modern AI doesn't solve the problem of how to economically recognize the contributions synthesized into the final product.

Many imagined that artificial intelligence would come from machines endowed with super-intelligence, machines that would understand the world so well that they would perform tasks far better than humans. Against those expectations, the fact that current AI is based on synthesizing the work of human beings, with no apparent self-awareness, is something of a letdown. Since IBM's chess-playing computer Deep Blue defeated then world champion Garry Kasparov in a 1997 match through brute-force calculation, most of the AI world has reluctantly accepted that large-scale computation would win out over attempts at self-aware machines, and ChatGPT and similar systems are the result.

However, the disappointment may be misplaced, since the training of AI systems is closer to how “genius” humans operate than we want to admit. In his book Outliers, Malcolm Gladwell detailed research showing that experts in every field often distinguish themselves by the time they spend practicing and learning from others in their field. Gladwell popularized this as the “10,000-hour rule,” the amount of time identified experts, whether athletes, artists, or scientists, put in before claiming top spots in their fields.
A famous example is Bill Gates, who was in a position to pioneer personal computer software because as a teenager he had unusual access to a computer at the University of Washington, where he could rack up thousands of hours of practice absorbing the best ideas in the field at a time when that was impossible for others his age. Some inherent skill and the tenacity to pursue dedicated practice matter for human beings; for computers, sheer computing power delivers the equivalent.

That genius comes from exposure to others' work is hardly a new concept. Isaac Newton is one of the certified geniuses of the last millennium, and he readily acknowledged that his ideas came from “standing on the shoulders of giants.” Newton invented calculus, critical to modern math and science, but so did Gottfried Leibniz in the same period, reflecting their shared exposure to the new pool of mathematical knowledge being developed in the 17th century. Calculus was less the result of individual genius than the inevitable synthesis of the burst of mathematical work emerging in that period.

Pablo Picasso was blunter about the dependence of genius on others, in a line famously attributed to him: “Good artists copy, great artists steal.” His own cubist innovations were heavily influenced by exposure to African masks only recently made available (via colonial plunder) to the European art world. And no, the Africans whose work inspired that art were not compensated.

The dilemma is that such borrowed innovation has become ever more central to the economy. Google built a trillion-dollar-plus business on the singular insight that computers were too stupid by themselves to decide what was worth linking to in a search engine.
Instead, Stanford students Larry Page and Sergey Brin decided to survey what other humans had chosen to link to on their own web pages. Google would then prioritize pages with the most links pointing to them, giving extra weight to links from sites that were themselves widely linked to, so that the chain of links borrowed the judgment of millions of people to decide what was worthwhile on the web.

The problem was that by linking directly to articles, Google bypassed the front pages of newspapers and ended up gutting the advertising revenue of newspapers and other publications. Magazines and newspapers had themselves always been aggregators of the insights of their own writers, picking up income as readers' eyes passed over advertisements on their way from one article to the next, and compensating writers (usually poorly) with the proceeds. The disaggregation of articles through direct links on the web means that Google collects the advertising revenue while sharing none of it with the sites it links to. The correlation between rising Google revenue and collapsing newspaper revenue is clear. Newspapers may try to compensate by piling pop-up ads into the middle of articles, but that increasingly unpleasant reading experience reflects the growing market failure of the modern knowledge-based marketplace.

What happened to newspapers is likely to happen to a wide swath of industries as AI tools make it far easier to borrow the work of others, in any industry, without compensation. You can't have functioning markets if the price of many skills falls to zero or near zero for the companies borrowing them via new AI tools.

Market-Based Policy Tools Can't Save an AI-Dominated Economy Where Innovation Externalities Go Uncompensated

Taking a step back, a technological advance that lets so many more people benefit easily and cheaply from knowledge is an incredible gain for humanity, and it is only a “problem” because we depend on markets to compensate those producing the original knowledge being shared.
Economics deals with this kind of “problem” under the label of externalities: some kinds of production have costs not reflected in the price, like pollution, which are labeled “negative” externalities, while others have broader social benefits not captured in the market price, so-called “positive” externalities. In theory, economics addresses negative externalities with any policy that forces companies to internalize those costs, whether through regulation or taxes on the factors of production with negative impacts. The failure to enact effective carbon taxes shows this is more of a theoretical success for economics, but there is at least a market-like approach to solving the problem.

But economists have never had a clear market-based policy solution to positive externalities, usually recommending some form of government subsidy for activities that generate such gains for others. Education is the clearest example of something that benefits the person receiving it but also helps society as a whole through higher productivity and a higher-skilled workforce. Older master-servant relationships internalized the educational process in the skilled trades, with the master paying for the training and then recouping some of those costs through the apprentice's years of work. In the industrial period, workers moved from job to job too often for employers to gain much from paying for years of their education, so people were left on their own to pay for it. Because the societal benefits of education were now diffuse, most modern nations adopted public schools as the solution, the first mass socialist program of the 19th century. That solves the incentive problem for people seeking education or other goods whose value spills over to others, but it doesn't address how those benefiting from those externalities pay for them.
In the past, businesses at least had to hire workers to gain access to the skills acquired through education or on-the-job learning, but in the age of AI, the 10,000 hours of learning by experts across a field can easily be borrowed by companies without compensation. One way to understand new AI systems is that they are incredible engines for plundering positive externalities across the economy.

Can't Intellectual Property Laws Save Markets in the Age of AI?

Some hope we can tweak intellectual property laws to make sure those whose work is borrowed in training AI systems get paid, creating some version of micro-transactions to maintain a market in innovation. Three artists have already filed a class action lawsuit against multiple generative AI platforms for using their work to train AI systems without permission.

The problem here is that the bar for proving intellectual property infringement is high, for good reasons. First, to be a violation, a work has to be copied wholesale, with no substantial transformation, and the work copied has to be original itself. AI systems do transform and synthesize other works, so any legal standard that said, for example, that a derivative work based more than 50 percent on another work is a violation would just lead AI systems to build in a 49 percent ceiling on borrowing from any particular work.

Second, there is a wide range of day-to-day innovation that we have never treated as patentable but that gets embodied in the brains of experts in every field. The messy law of trade secrets has been used in some cases to try to value ideas falling below the threshold of patent and copyright protection, but usually in ways that have done more to restrict workers' right to leave their employers than to compensate those creating that innovation.
If anything, the law is moving away from trade secret protection, with the FTC recently proposing to restrict employer non-compete clauses and to tighten when allegations of trade secret misappropriation would be allowed.

Some of the lawsuits against AI systems argue that it should be illegal for AI systems even to view borrowed art or other work without first getting permission. But if it is illegal for AI models to scrape knowledge from art images, computer code, or literary works, would it then be illegal for human artists to store images of other art in their brains and draw on it to create their own art or other works? Using the law to stop borrowed innovation is a double-edged sword: AI systems might easily be built to meet tightened standards, while the same standards applied to human innovators might create a rabid fear of lawsuits, kill what is known as “fair use” in intellectual property law, and undermine the real benefits of positive externalities in innovative fields that depend on creative borrowing.

And it's not really a market system if we end up with an endless parade of lawsuits in which courts analyze and assign monetary values to each innovation. That just pretends unelected judges are not acting as government regulators, and it assumes that judges largely lacking expertise in a field would do better at assigning compensation for artistic, scientific, and other forms of innovation than experts appointed by the executive branch.

Trying to trace the daisy chain of measurable influence on innovation is a bit of a fool's errand. The Velvet Underground was a notoriously uncommercial band, but as music producer Brian Eno is reported to have said, “The first Velvet Underground album only sold 10,000 copies, but everyone who bought it formed a band.” Would the group have had to sue those 10,000 bands to recoup the real value of its contribution?
Extend this problem to computer code, legal writing, and all the other areas where AI is likely to undermine compensation for innovation and expertise, and the problems of having the legal system decide these issues multiply. And do progressives really want John Roberts' Supreme Court to have the final say on who gets compensated in our economy? It would be better to find some other approach to rewarding valuable work, one untethered from micro-transactions for each thread of its influence. Encourage a thousand flowers to bloom and let the AI aggregators and synthesizers produce the tools that improve all our lives.

How about Using Antitrust to Stop the Concentration of Economic Power Due to AI?

Some might hope more vigorous antitrust enforcement could give those producing new innovations a better chance against dominant players using AI to scavenge their work. That the two companies most identified with the new AI push (Microsoft, through its role supporting ChatGPT, and Google, with its Bard AI) have both been targets of antitrust action reinforces the idea that antitrust might help save market competition as a tool to manage the future.

There's little question that the economy is increasingly concentrated in fewer corporate hands. Seventy-five percent of U.S. industries have become more concentrated over the past two decades, driven by increasing returns to scale and network effects that have reinforced the advantages of size. Meanwhile, business start-ups have fallen off dramatically across industries over the last few decades, as this Brookings graphic highlights. Many start-ups see their goal less as becoming viable companies than as getting bought up by the big fish in their industries, with a big payday for their founders. One impact of market concentration noted by analysts is a long-run decline in U.S. business investment, which has fallen to less than half its level in the 1970s. That is consistent with the idea that firms increasingly benefit from absorbing positive externalities created outside their firms rather than from returns on internal investment.

Tech activist and author Cory Doctorow has described what he calls the “enshittification” of economic and social activity on digital platforms: users, businesses, and employees are lured onto such platforms, then their contributions are drained into the pockets of shareholders as the experience gets worse for everyone else.

Yet I am extremely skeptical that antitrust can return us to some ideal where market competition delivers greater equity for all actors in the economy. I say that as someone who spent a few years in the 1990s at a group called NetAction arguing for antitrust action against Microsoft, and who spent a chunk of the last decade writing about and advocating for antitrust action against Google and other tech platforms.

Antitrust can really work only if monopoly practices have undermined fundamentally sound markets, while all the evidence indicates that it is the breakdown of functioning markets that has empowered monopoly. A large part of that breakdown has come from the scavenging of positive externalities from others, which drives economic concentration, as the rise of Google described above reflects. And the coming explosive use of AI technology is only going to accelerate that dynamic across far more industries. Breaking up a few whales into a gang of slightly smaller barracudas isn't going to reduce the scavenging of others' work and expertise or improve equity in the overall economy in the age of AI.
Antitrust can eliminate some of the worst practices of enshittification (in Doctorow's colorful phrase) and restrict other anti-social behavior by the currently dominant players, which is one reason I am still enthusiastic about “new antitrust” advocates like current FTC Chair Lina Khan. However, antitrust is ultimately a limited tool for addressing the fundamental problems that will expand in the coming AI-driven economy.

Where to Start with Policies for the New AI Economy

I'm not going to lay out a full program for the socialist renewal of the economy in a post-AI world (I'll leave that to future essays), but a few key principles are clear.

Many liberals have tended to treat socializing education and health care as a small diversion from market economics, but when we have to compensate for the distortions AI will be wreaking at the core of the economy, we are talking about more than social democracy, or however people might want to characterize Denmark. AI can be one of the greatest technological gifts possible for humanity if we deploy it in the right way. Or it can just gobble up the seed corn of innovation, leaving little for future harvests as inequality grows across the economy. That is going to be the major economic and political battle of the coming era.


